Hello. This is David Aliaga. This is my second time writing for SenseTime's TECH blog. My profile is at the end of this post!

Prologue

Recently I started playing with Yocto, an open source project that lets you build your own Linux system from scratch and boot it on your embedded hardware. My goal was to run ROS on Yocto, but I ran into several obstacles along the way, so this article fills in the missing steps needed to build an image successfully.

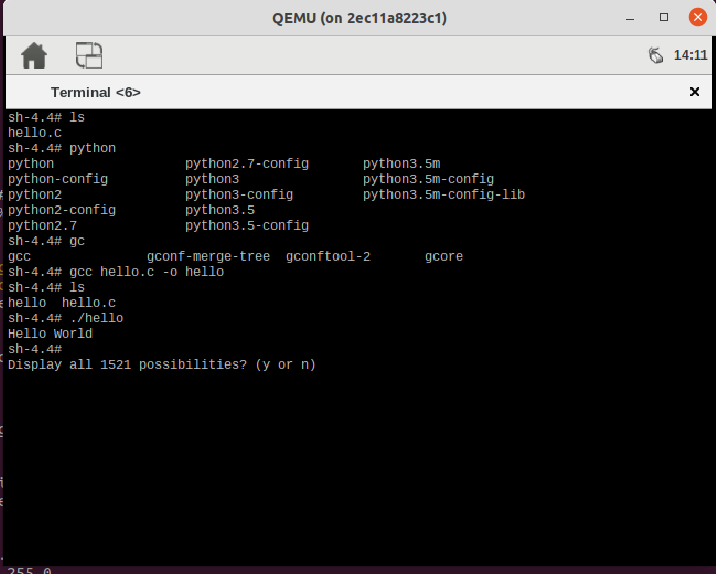

I didn't want to install things on my Ubuntu machine, so I used Docker containers as the build host instead. For this I used the excellent example in hubshuffle's yocto-docker-quickstart. I played with it a bit and built the core-image-sato-dev image, but when I ran it I was so disappointed: I could only run Python scripts in it, not C programs 🙁

But then I built core-image-sato-sdk and yeahh!!!! I could compile my first C "Hello World!" program! 😛

Life was good!

My primary objective, though, was to run ROS on Yocto, so I kept looking for a way to do that. I found the meta-ros repository, read its build instructions, and decided to give it a try. This article is a memo of that experience, and I try to make things a little clearer than what I found in the existing instructions. [^1]

meta-ros on a Docker Image

The first thing I had to do was rebuild the Docker image I was using, since the image I got from hubshuffle did not have all the prerequisites. I also changed the base image from Ubuntu 16.04 to 18.04. The new Dockerfile is:

    # this image augments Ubuntu 18.04 with the minimum necessary to run Yocto with support for QEMU and devtool
    #FROM ubuntu:16.04
    FROM ubuntu:18.04
    MAINTAINER hubshuffle <hubshuffled@gmail.com>

    #ENV TZ=Asia/Tokyo

    # Upgrade system, add Yocto Project basic dependencies and extras
    RUN apt-get update && apt-get -y upgrade && DEBIAN_FRONTEND=noninteractive TZ=Asia/Tokyo apt-get -y install kmod gawk wget git-core diffstat unzip texinfo \
        gcc-multilib build-essential chrpath socat cpio python python3 python3-pip python3-pexpect xz-utils debianutils \
        python3-git python3-jinja2 libegl1-mesa \
        iputils-ping libsdl1.2-dev xterm curl vim tzdata
    RUN apt-get -y install g++-multilib lsb-release python3-distutils time

    # for tunctl (qemu)
    RUN apt-get -y install uml-utilities

    # for toaster
    RUN apt-get -y install python-virtualenv daemon

    # for package feeds
    RUN apt-get -y install lighttpd

    # for bitbake -g
    RUN apt-get -y install python3-gi python-pip python3-aptdaemon.gtk3widgets

    # Set up locales
    RUN apt-get -y install locales apt-utils sudo && dpkg-reconfigure locales && locale-gen en_US.UTF-8 && update-locale LC_ALL=en_US.UTF-8 LANG=en_US.UTF-8
    ENV LANG en_US.utf8

    # install the necessary ip library
    #RUN apt-get -y install iproute2
    RUN apt update && apt -y install iproute2

    # Clean up APT when done.
    RUN apt-get clean && rm -rf /var/lib/apt/lists/* /tmp/* /var/tmp/*

    # Replace dash with bash
    RUN rm /bin/sh && ln -s /bin/bash /bin/sh

    # Install repo
    RUN curl -o /usr/local/bin/repo https://storage.googleapis.com/git-repo-downloads/repo && chmod a+x /usr/local/bin/repo

Here I replaced the base image because a package was unavailable for the previous one, and I fixed a problem where the build stalled halfway on an interactive tzdata prompt. I also installed iproute2 because apparently it is not installed by default in the Ubuntu 18.04 image. <-- too much talk. You can ignore this 😉

I built the new image by running this from the folder that contains the Dockerfile:

    docker image build -t custom-yocto .

Then I was ready to enter my container. I modified the start.sh script from yocto-docker-quickstart so that it runs the image I built and not the one by hubshuffle (I just changed the name of the image it calls), like this:

    if [ "${run_additional_instance}" = true ]; then
        # exec attaches to the running container, so it takes the container
        # name set with --name below, not the image name
        docker container exec \
            -it \
            --user yocto \
            -w /opt/yocto \
            kansai-yocto \
            /bin/bash
    else
        docker container run \
            -it \
            --rm \
            -v "${PWD}":/opt/yocto \
            --name kansai-yocto \
            ${arg_net_forward} \
            ${arg_x11_forward} \
            ${arg_privileged} \
            --volume "${PWD}/home":/home/yocto \
            custom-yocto:latest \
            sudo bash -c "groupadd -g 7777 yocto && useradd --password ${empty_password_hash} --shell /bin/bash -u ${UID} -g 7777 \
                yocto && usermod -aG sudo yocto && usermod -aG users yocto && cd /opt/yocto && su yocto"
    fi

With that I was inside the container!

Setting things up

I tried to follow the build instructions, so first I created a new directory:

    mkdir ros_workspace
    cd ros_workspace

and then:

    git clone -b build --single-branch https://github.com/ros/meta-ros.git build
    mkdir conf
    ln -snf ../conf build/.

mmmm, a symbolic link... clever 🙂
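If the symlink trick is not obvious, here is a minimal Python sketch that recreates the same layout in a throwaway temporary directory and checks where `build/conf` ends up pointing. The directory names mirror the commands above; the helper name `link_conf_into_build` is made up for this illustration and is not part of meta-ros.

```python
import os
import tempfile


def link_conf_into_build(workspace):
    """Recreate the effect of `ln -snf ../conf build/.` inside `workspace`.

    Hypothetical helper for illustration only; it expects `workspace/build`
    and `workspace/conf` to exist already.
    """
    link_path = os.path.join(workspace, "build", "conf")
    # Like -f/-n: replace an existing link instead of following it.
    if os.path.islink(link_path):
        os.remove(link_path)
    # The target is relative to the directory that holds the link (build/).
    os.symlink(os.path.join("..", "conf"), link_path)
    return link_path


with tempfile.TemporaryDirectory() as workspace:
    os.mkdir(os.path.join(workspace, "build"))
    os.mkdir(os.path.join(workspace, "conf"))
    link = link_conf_into_build(workspace)
    link_target = os.readlink(link)
    same_directory = os.path.samefile(link, os.path.join(workspace, "conf"))
    print(link_target, same_directory)
```

In other words, anything that looks for `build/conf` lands in the top-level `conf` directory of the workspace.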

I was going for the ROS 1 Melodic distro and decided to use the dunfell OpenEmbedded release, so I set:

    distro=ros1
    ros_distro=melodic
    oe_release_series=dunfell
    cfg=$distro-$ros_distro-$oe_release_series.mcf
    cp build/files/$cfg conf/.

Then I cloned the OpenEmbedded metadata layers and generated conf/bblayers.conf by running:

    build/scripts/mcf -f conf/$cfg

Finally I did the rest:

    unset BDIR BITBAKEDIR BUILDDIR OECORELAYERCONF OECORELOCALCONF OECORENOTESCONF OEROOT TEMPLATECONF
    source openembedded-core/oe-init-build-env
    cd -

Now, the instructions tell you to use a separate disk and say that the build will take almost 100GB. I am not going to say it didn't, but in my case I had 100GB available and the build did not use much of it. Instead of a separate disk, I created a folder under /opt/yocto:

    mkdir cache-ros

And then I added the following content to the bottom of the file conf/local.conf:

    # ROS-ADDITIONS-BEGIN
    # ^^^^^^^^^^^^^^^^^^^ In the future, tools will expect to find this line.

    # Increment the minor version whenever you add or change a setting in this file.
    ROS_LOCAL_CONF_SETTINGS_VERSION := "2.2"

    # If not using mcf, replace ${MCF_DISTRO} with the DISTRO being used.
    DISTRO ?= "${MCF_DISTRO}"

    # If not using mcf, set ROS_DISTRO in conf/bblayers.conf .

    # The list of supported values of MACHINE is found in the Machines[] array in the .mcf file for the selected configuration.
    # Use ?= so that a value set in the environment will override the one set here.
    #MACHINE ?= "<SUPPORTED-MACHINE>"

    # Can remove if DISTRO is "webos". If not using mcf, replace ${MCF_OPENEMBEDDED_VERSION} with the version of OpenEmbedded
    # being used. See the comments in files/ros*.mcf for its format.
    ROS_DISTRO_VERSION_APPEND ?= "+${MCF_OPENEMBEDDED_VERSION}"

    # Can remove if DISTRO is not "webos". If not using mcf, replace ${MCF_WEBOS_BUILD_NUMBER} with the build number of webOS OSE
    # being used.
    ROS_WEBOS_DISTRO_VERSION_APPEND ?= ".${MCF_WEBOS_BUILD_NUMBER}"

    # These two are only used in the additions to conf/local.conf below:
    ROS_OE_RELEASE_SERIES_SUFFIX ?= "-${ROS_OE_RELEASE_SERIES}"
    # Because of a bug in OpenEmbedded, <ABSOLUTE-PATH-TO-DIRECTORY-ON-SEPARATE-DISK> can not be a symlink.
    ROS_COMMON_ARTIFACTS ?= "/opt/yocto/cache-ros"

    # Set the directories where downloads, shared-state, and the output from the build are placed to be on the separate disk.
    DL_DIR ?= "${ROS_COMMON_ARTIFACTS}/downloads"
    SSTATE_DIR ?= "${ROS_COMMON_ARTIFACTS}/sstate-cache${ROS_OE_RELEASE_SERIES_SUFFIX}"
    TMPDIR ?= "${ROS_COMMON_ARTIFACTS}/BUILD-${DISTRO}-${ROS_DISTRO}${ROS_OE_RELEASE_SERIES_SUFFIX}"

    # Don't add the libc variant suffix to TMPDIR.
    TCLIBCAPPEND := ""

    # As recommended by https://www.yoctoproject.org/docs/latest/mega-manual/mega-manual.html#var-BB_NUMBER_THREADS
    # and https://www.yoctoproject.org/docs/latest/mega-manual/mega-manual.html#var-PARALLEL_MAKE:
    BB_NUMBER_THREADS ?= "${@min(int(bb.utils.cpu_count()), 20)}"
    PARALLEL_MAKE ?= "-j ${BB_NUMBER_THREADS}"

    # Reduce the size of the build artifacts by removing the working files under TMPDIR/work. Comment this out to preserve them
    # (see https://www.yoctoproject.org/docs/latest/mega-manual/mega-manual.html#ref-classes-rm-work).
    INHERIT += "rm_work"

    # Any other additions to the file go here.

    # EXTRA_IMAGE_FEATURES is just one of the many settings that can be placed in this file. You can find them all by searching
    # https://www.yoctoproject.org/docs/latest/mega-manual/mega-manual.html#ref-variables-glossary for "local.conf".

    # Uncomment to allow "root" to ssh into the device. Not needed for images with webOS OSE because it implicitly adds this
    # feature.
    # EXTRA_IMAGE_FEATURES += "ssh-server-dropbear"

    # Uncomment to include the package management utilities in the image ("opkg", by default). Not needed for images with
    # webOS OSE because it implicitly adds this feature.
    # EXTRA_IMAGE_FEATURES += "package-management"

    # Uncomment to have all interactive shells implicitly source "setup.sh" (ROS 1) or "ros_setup.sh" (ROS 2).
    # EXTRA_IMAGE_FEATURES += "ros-implicit-workspace"

    # Uncomment to display additional useful variables in the build configuration output.
    # require conf/distro/include/ros-useful-buildcfg-vars.inc

    BB_DISKMON_DIRS ??= "\
        STOPTASKS,${TMPDIR},1G,100K \
        STOPTASKS,${DL_DIR},1G,100K \
        STOPTASKS,${SSTATE_DIR},1G,100K \
        STOPTASKS,/tmp,100M,100K \
        ABORT,${TMPDIR},100M,1K \
        ABORT,${DL_DIR},100M,1K \
        ABORT,${SSTATE_DIR},100M,1K \
        ABORT,/tmp,10M,1K"

    # vvvvvvvvvvvvvvvvv In the future, tools will expect to find this line.
    # ROS-ADDITIONS-END
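The `BB_NUMBER_THREADS` line in the configuration above uses BitBake's inline Python syntax (`${@...}`) to cap the number of parallel tasks at 20. As a rough sketch of the same arithmetic outside BitBake, the snippet below uses `os.cpu_count()` as a stand-in for `bb.utils.cpu_count()`, which only exists inside BitBake; treating the two as equivalent is an assumption, and the function name `bitbake_parallelism` is invented for this example.

```python
import os


def bitbake_parallelism(cpu_count, cap=20):
    """Mirror `min(int(cpu_count), 20)` from the local.conf snippet."""
    threads = min(int(cpu_count), cap)
    # PARALLEL_MAKE reuses the same number as a `make -j` argument.
    return threads, f"-j {threads}"


# os.cpu_count() can return None, so fall back to 1 in that case.
host_cpus = os.cpu_count() or 1
threads, parallel_make = bitbake_parallelism(host_cpus)
print(f"BB_NUMBER_THREADS={threads} PARALLEL_MAKE='{parallel_make}'")
```

So an 8-core container would get 8 threads, while a 64-core build server would be held at 20.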

Notice that, just in case, I made two changes from the instructions. First, I set the machine near the top of the conf/local.conf file with

    MACHINE ??= "qemux86"

(notice that qemux86-64 is not supported for ROS 1), and second, I moved

    BB_DISKMON_DIRS ??= "\
        STOPTASKS,${TMPDIR},1G,100K \
        STOPTASKS,${DL_DIR},1G,100K \
        STOPTASKS,${SSTATE_DIR},1G,100K \
        STOPTASKS,/tmp,100M,100K \
        ABORT,${TMPDIR},100M,1K \
        ABORT,${DL_DIR},100M,1K \
        ABORT,${SSTATE_DIR},100M,1K \
        ABORT,/tmp,10M,1K"

to the end of the file, just as a precaution, since it seems BB_DISKMON_DIRS depends on DL_DIR.

Then I was ready to build the image.

Building the image

To build the image I ran:

    source openembedded-core/oe-init-build-env
    cd -
    bitbake -k ros-image-core

I was sleepy and didn't want my build to stop at the first error, so I used the -k option, which tells bitbake to keep going as far as it can after a failure.

Voila! Done! right?? 🙂

Wrong! 🙁

Actually running the image

This is the strange thing. The instructions do not explain how to run the image (I suppose they think it is just too simple... ouchh), nor do they clearly specify how to add additional packages (but that is for another blog). In fact, I have not yet tried adding meta-ros to an existing OpenEmbedded project. (More future work!)

Anyway, first I tried

    runqemu qemux86 ros-image-core

and it failed completely. Something about

    runqemu - ERROR - Failed to find /opt/yocto/ros_workspace/tmp/deploy/images/qemux86/ros-image-core-qemux86.qemuboot.conf (wrong image name or BSP does not support running under qemu?).

Yeah, I could see that the file did not exist, but a similar one did, so I tried that name (the -d is to show debug messages too)

    runqemu -d qemux86 ros-image-core-melodic

and yep! It passed that part. Mmmm, strange. This is not written anywhere!!
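Rather than guessing the image name, one could list the `.qemuboot.conf` files in the deploy directory and derive the valid names from them. The sketch below assumes the `<image>-<machine>.qemuboot.conf` naming seen in the error message; the function name `qemuboot_image_names` is hypothetical, and the demo file name is inferred from the command that worked, not copied from a real directory listing.

```python
import os
import tempfile

SUFFIX = ".qemuboot.conf"


def qemuboot_image_names(deploy_dir, machine):
    """Return image names that have a `<name>-<machine>.qemuboot.conf` file.

    Assumes the file naming seen in the runqemu error message.
    """
    tail = f"-{machine}{SUFFIX}"
    names = []
    for filename in sorted(os.listdir(deploy_dir)):
        if filename.endswith(tail):
            names.append(filename[: -len(tail)])
    return names


# Demo on a fake deploy directory; both file names are made up for illustration.
with tempfile.TemporaryDirectory() as deploy_dir:
    open(os.path.join(deploy_dir, "ros-image-core-melodic-qemux86.qemuboot.conf"), "w").close()
    open(os.path.join(deploy_dir, "bzImage"), "w").close()
    found = qemuboot_image_names(deploy_dir, "qemux86")
    print(found)
```

Pointing it at the real `deploy/images/qemux86` directory of a build should list the names that can be passed as the second argument to runqemu.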

With a previous version of the Dockerfile it failed in another part:

    runqemu - ERROR - runqemu-ifup: /opt/yocto/ros_workspace2/openembedded-core/scripts/runqemu-ifup
    runqemu - ERROR - runqemu-ifdown: /opt/yocto/ros_workspace2/openembedded-core/scripts/runqemu-ifdown
    runqemu - ERROR - ip: None
    runqemu - ERROR - In order for this script to dynamically infer paths
    kernels or filesystem images, you either need bitbake in your PATH
    or to source oe-init-build-env before running this script.

Reading the runqemu script, I could see that a which command was failing to find the ip command. So I did

    sudo apt update && sudo apt install iproute2

but with the new Dockerfile shown above this error does not happen anymore.
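The `ip: None` line in that error is the giveaway: the lookup for the `ip` binary came back empty. A quick way to check for this kind of problem before launching runqemu is to do the same lookup yourself. This is a minimal sketch using Python's `shutil.which`; the helper name `missing_tools` and the fake tool name are invented for the example.

```python
import shutil


def missing_tools(tools):
    """Return the tools from `tools` that are not found on PATH."""
    return [tool for tool in tools if shutil.which(tool) is None]


# `ip` is the command runqemu could not find; the second entry is a
# deliberately fake name to show what a failed lookup looks like.
missing = missing_tools(["ip", "surely-not-installed-tool-xyz"])
print("missing:", missing)
```

If `ip` shows up in the list, installing iproute2 inside the container is the fix described above.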

With this I could run

    runqemu -d qemux86 ros-image-core-melodic

and got my beautiful ROS image running.

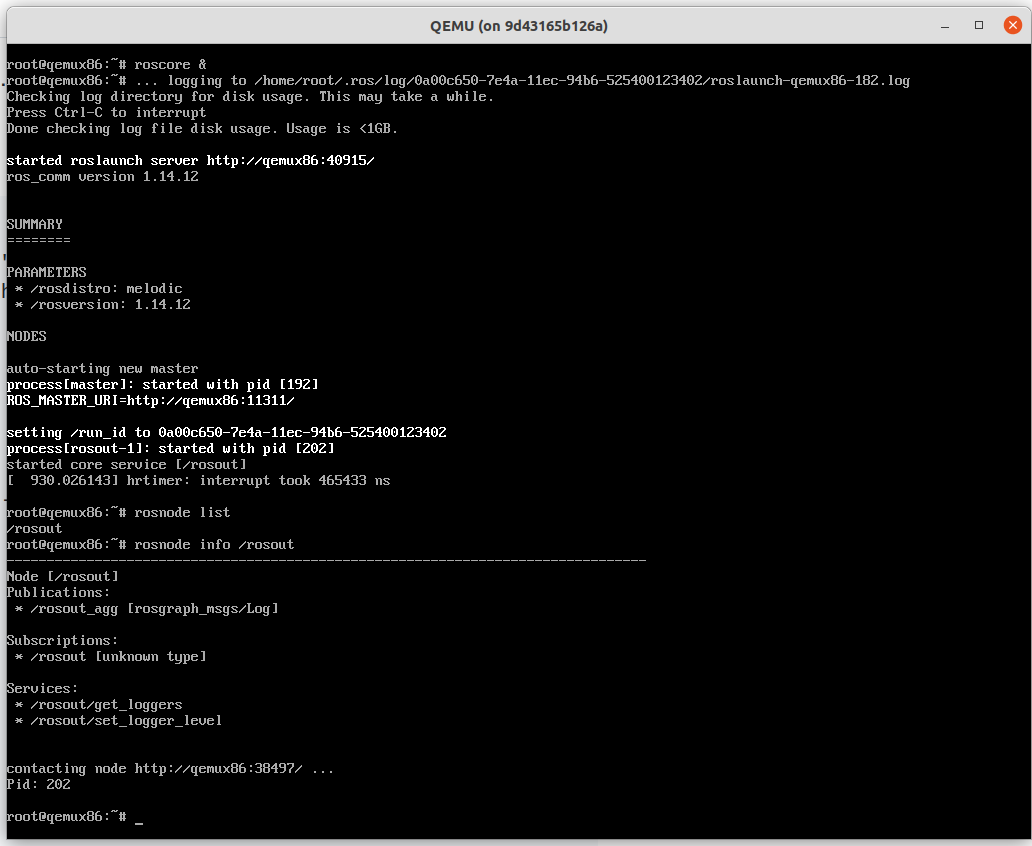

Some "Sanity test"

As recommended, I did some sanity tests:

    source /opt/ros/melodic/setup.sh
    echo $LD_LIBRARY_PATH
    roscore &
    rosnode list
    rosnode info /rosout

and then ran a very basic Python program found in an article in Japanese:

    # coding: UTF-8
    import rospy

    rospy.init_node('hello_world_node')  # create a node with the name given as the argument
    rospy.loginfo('Hello World')  # send a string to the ROS network
    rospy.spin()  # start the infinite loop

and then

    python hello_world.py

and I got

    [INFO] [1561530970.398307]: Hello World

Things to do from now on

Not everything is good in the land of Yocto ROS.

First, for some reason catkin does not work well. I wonder if the reason is that the image is a minimal roscore one. Apparently I have to add the catkin-dev package to the build. However, after I did, even though catkin_init_workspace seems to work, catkin_make doesn't. And the environment does not have a C++ compiler installed!

So this still needs work.

But that is work for another day!

[^1]: By the way, there is an article in Japanese on building a ROS 2 image, but be warned: first, the build there is done natively, and second, the branch it uses apparently does not exist anymore.

Author profile

- Engineer on the Software Development Team. I have been in Kyoto since I was a postgraduate student in the Graduate School of Informatics. I am interested in A.I., robotics and software; early this year I finished the Self-Driving Car Engineer Nanodegree Program, and I am always eager to learn new fields.