Introduction

Hi everyone, Rusty Punzalan here. I am originally from the Philippines and first came to Japan for graduate school. I am currently a member of the Advanced Driving Technology Department at SenseTime Japan, where I have worked for three years. Below is an article I wrote about testing machine learning application systems.

Transitioning from researcher to software engineer has taught me to appreciate the value of tests in software development. During my years in research, first as a student and later as a postdoc, the emphasis was always on presenting new ideas and publishing papers. The resulting software was usually treated as a proof of concept: as long as it worked and could reproduce the experimental results, few or no resources were spent on testing and maintaining it. Things work out a bit differently outside the confines of academia.

The importance of software quality and maintainability became clear to me soon after I switched to a software engineering job. It quickly became apparent that design and implementation are just the first steps in creating a reliable software system. Creating effective tests goes a long way toward ensuring robust, secure and high-performance software.

Software testing is a complicated process, and machine learning models add further layers of complexity. This blog post details some of my experiences designing, writing and maintaining tests for machine learning application systems, specifically systems that use deep neural network (DNN) models.

Machine Learning Application Systems

A fascinating blog post I once read discussed effective testing for machine learning systems and how it differs from testing traditional software systems. It points out that in a traditional software system, code is written to produce the desired behavior, whereas in a machine learning system, the desired behavior is provided (through examples) to obtain the logic of the system. The post then offers some strategies for testing machine learning systems. One perspective I can add comes from working with machine learning application systems, in which machine learning models act as one component among several. Other parts of such a system may include sensor data acquisition, post-processing algorithms and a communication interface.

|

|---|

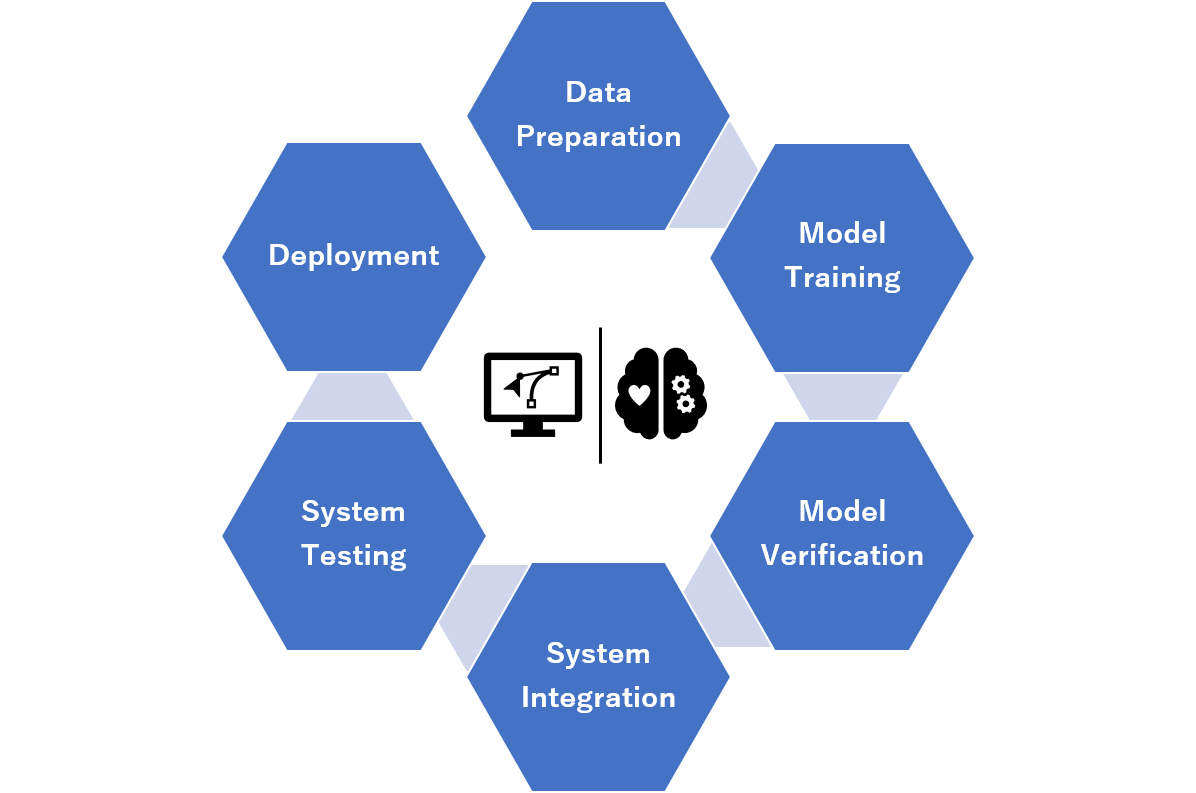

| Fig. 1. Development cycle for machine learning application systems. |

In a machine learning application system, one or more DNN models are embedded into the system and provide its main logic. The other parts include input and output handling, post-processing and optimization, communication with other systems, and data acquisition from multiple sensors. System testing involves ensuring that both the written logic and the learned logic consistently produce the desired behavior. This also includes testing in different deployment environments, such as the cloud, dedicated AI computers and embedded platforms.
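One practical consequence is that the written logic can be tested deterministically by replacing the DNN with a fake model that returns fixed outputs, while the learned logic is evaluated separately against annotated data. The Python sketch below assumes a simple detector interface; `Detection`, `FakeDetector`, `filter_detections` and `run_pipeline` are hypothetical names chosen for illustration, not part of any particular system.

```python
from dataclasses import dataclass
from typing import Any, List


@dataclass
class Detection:
    label: str
    score: float  # model confidence in [0, 1]


class FakeDetector:
    """Hypothetical stand-in for a DNN model that always returns fixed detections."""

    def __init__(self, outputs: List[Detection]):
        self._outputs = outputs

    def predict(self, image: Any) -> List[Detection]:
        return list(self._outputs)


def filter_detections(detections: List[Detection], threshold: float) -> List[Detection]:
    """Written logic: keep detections at or above the threshold, highest score first."""
    kept = [d for d in detections if d.score >= threshold]
    return sorted(kept, key=lambda d: d.score, reverse=True)


def run_pipeline(model: Any, image: Any, threshold: float = 0.5) -> List[Detection]:
    """Learned logic (model.predict) followed by written logic (filter_detections)."""
    return filter_detections(model.predict(image), threshold)


# With a fake model, the expected output of the written logic is known exactly.
fake = FakeDetector([Detection("car", 0.9), Detection("person", 0.3), Detection("bike", 0.6)])
print([d.label for d in run_pipeline(fake, image=None)])  # ['car', 'bike']
```

Because the fake model's output is fixed, any change in the result points to the written post-processing logic rather than to the model.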

System Test Creation Flow

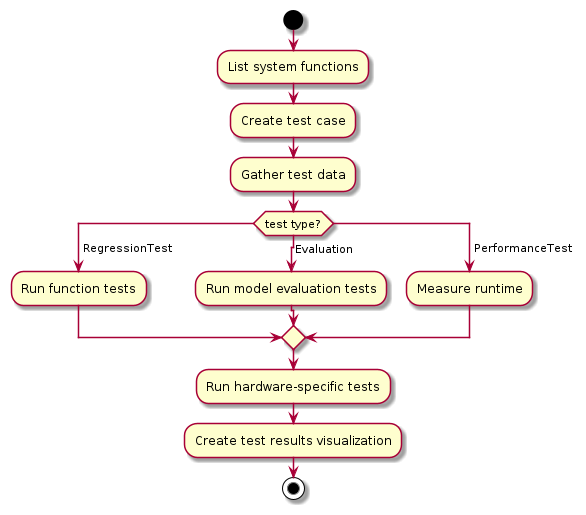

Testing a machine learning application system, as with a traditional system, involves listing the individual functions of the system and creating scenarios to test those functions. A clearly defined list of functions helps streamline the creation of test cases and decide which kinds of data are needed.
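As a rough illustration, the function list and the test cases can be kept as plain data, which makes it easy to check that every function is covered and to derive which data sets must be collected. The function names, case IDs and data set names below are hypothetical.

```python
from typing import Dict, List

# Hypothetical functions of a driving-assistance system under test.
SYSTEM_FUNCTIONS: List[str] = ["vehicle_detection", "pedestrian_detection", "traffic_light_recognition"]

# Each test case names the function it exercises, a scenario, and the data set it needs.
TEST_CASES: List[Dict[str, str]] = [
    {"id": "TC-001", "function": "vehicle_detection", "scenario": "highway, daytime", "data": "highway_day"},
    {"id": "TC-002", "function": "vehicle_detection", "scenario": "urban, night", "data": "urban_night"},
    {"id": "TC-003", "function": "pedestrian_detection", "scenario": "crosswalk, rain", "data": "crosswalk_rain"},
]


def untested_functions(functions: List[str], cases: List[Dict[str, str]]) -> List[str]:
    """Return the functions that no test case exercises yet."""
    covered = {case["function"] for case in cases}
    return [f for f in functions if f not in covered]


def required_datasets(cases: List[Dict[str, str]]) -> List[str]:
    """Return the distinct data sets that must be gathered (and annotated) for the cases."""
    return sorted({case["data"] for case in cases})


print(untested_functions(SYSTEM_FUNCTIONS, TEST_CASES))  # ['traffic_light_recognition']
print(required_datasets(TEST_CASES))  # ['crosswalk_rain', 'highway_day', 'urban_night']
```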

Once the functions are defined and there is a list of test cases, test data needs to be gathered and, if necessary, annotated with ground truth. Testing machine learning functionality entails making sure that relevant subsets of the data are represented, to avoid subtle failures. One example is making sure that the test data includes corner cases that might not have been sufficiently represented during DNN model training. Figure 2 shows a test creation and execution workflow.

Fig. 2. System test creation and execution flowchart.
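One simple way to guard against missing corner cases, assuming each test sample is annotated with scene tags, is to count samples per tag and compare the counts against a minimum. The tags and thresholds below are hypothetical; appropriate values depend on the system and its operating conditions.

```python
from collections import Counter
from typing import Dict, Iterable, List


def underrepresented_tags(sample_tags: Iterable[List[str]], required: Dict[str, int]) -> Dict[str, int]:
    """Return {tag: count} for every required tag with fewer samples than its minimum."""
    counts = Counter(tag for tags in sample_tags for tag in tags)
    return {tag: counts[tag] for tag, minimum in required.items() if counts[tag] < minimum}


# Hypothetical annotated test set: each sample carries scene tags.
test_set_tags = [
    ["daytime", "clear"],
    ["night", "rain"],
    ["daytime", "rain"],
    ["daytime", "clear", "tunnel_exit"],
]
# Hypothetical minimum sample counts, including rare corner cases.
required_minimums = {"night": 2, "rain": 2, "tunnel_exit": 1, "backlight": 1}

print(underrepresented_tags(test_set_tags, required_minimums))  # {'night': 1, 'backlight': 0}
```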

Another step is to ensure that the system works both in the test environment and in actual deployment. Model training may be done on development servers, system development on individual desktop PCs, and deployment on dedicated AI computer platforms or embedded systems. Each of these environments can have different underlying hardware and firmware, such as different GPU architectures. Ensuring that the behavior and output are consistent across all environments is important for software reliability.
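A minimal sketch of such a consistency check, assuming the outputs to compare are lists of confidence scores produced from the same input in two environments. Exact equality is usually too strict across different GPUs, so the comparison uses a tolerance; the tolerance values and scores below are illustrative assumptions, not recommended settings.

```python
import math
from typing import Sequence


def outputs_consistent(reference: Sequence[float], candidate: Sequence[float],
                       rel_tol: float = 1e-3, abs_tol: float = 1e-5) -> bool:
    """Compare model outputs from two environments element by element.

    Exact equality is usually too strict: different GPU architectures or
    inference libraries can change floating-point results slightly.
    """
    if len(reference) != len(candidate):
        return False
    return all(math.isclose(r, c, rel_tol=rel_tol, abs_tol=abs_tol)
               for r, c in zip(reference, candidate))


# Hypothetical confidence scores for the same input, recorded in two environments.
dev_server_scores = [0.912, 0.455, 0.031]
embedded_scores = [0.9121, 0.4549, 0.0310]
print(outputs_consistent(dev_server_scores, embedded_scores))  # True
print(outputs_consistent(dev_server_scores, [0.90, 0.455, 0.031]))  # False
```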

Things to Consider When Creating Tests

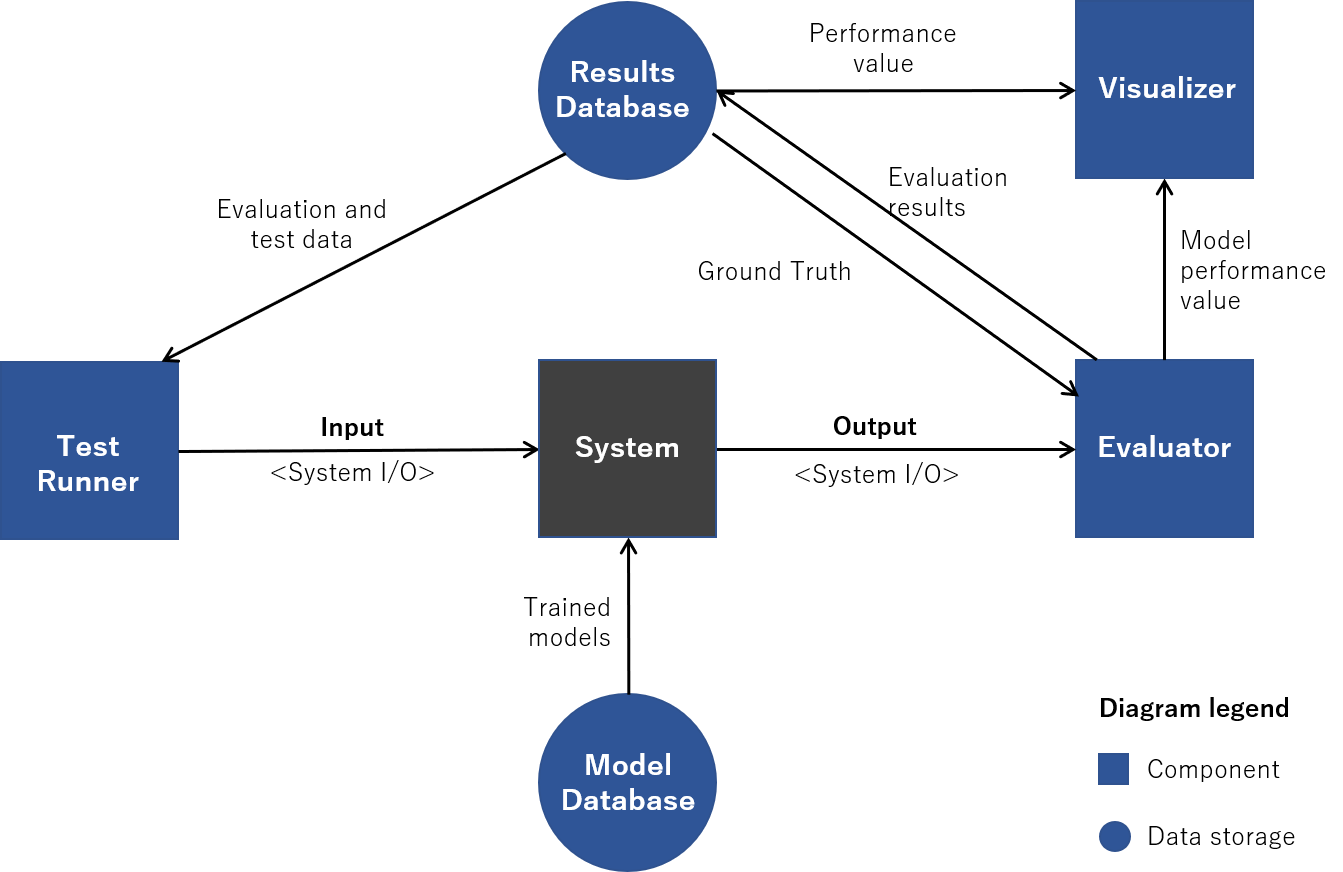

Designing tests for machine learning systems involves considering several aspects of the testing environment. A test architecture needs a few components to run effective, automated tests. Figure 3 describes a machine learning system test architecture. The test runner handles system setup and execution, as well as test and evaluation input. The evaluator parses the system output and analyzes the test results, then feeds the analysis results into a visualizer for easier interpretation. Finally, databases store the DNN models and test results.

Fig. 3. System test architecture. The system is treated as a black box with the DNN models integrated inside. Input and output are handled through the system interface.
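The division of responsibilities in Figure 3 can be sketched in a few lines of Python. This is only an outline under simplifying assumptions: the system is modeled as a black-box function from an input file name to a single label, `SystemTestCase`, `run_system`, `evaluate` and `summarize` are hypothetical names, and a plain-text report stands in for the visualizer. Storing results in a database is left out here.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional


@dataclass
class SystemTestCase:
    case_id: str
    inputs: List[str]        # e.g. image file names sent to the system
    ground_truth: List[str]  # expected label for each input


def run_system(system: Callable[[str], Optional[str]], case: SystemTestCase) -> List[Optional[str]]:
    """Test runner: feed each input to the black-box system and collect its outputs."""
    return [system(item) for item in case.inputs]


def evaluate(outputs: List[Optional[str]], case: SystemTestCase) -> Dict[str, float]:
    """Evaluator: compare the system outputs with the ground truth."""
    total = len(case.ground_truth)
    correct = sum(out == gt for out, gt in zip(outputs, case.ground_truth))
    return {"accuracy": correct / total if total else 0.0}


def summarize(results: Dict[str, Dict[str, float]]) -> str:
    """Minimal stand-in for a visualizer: a plain-text report, one line per test case."""
    return "\n".join(f"{cid}: accuracy={m['accuracy']:.2f}" for cid, m in sorted(results.items()))


# Hypothetical black-box system; in practice this would go through the system interface.
fake_system = {"img_001.png": "car", "img_002.png": "person", "img_003.png": "car"}.get
case = SystemTestCase("TC-001", ["img_001.png", "img_002.png", "img_003.png"], ["car", "person", "bike"])
metrics = evaluate(run_system(fake_system, case), case)
print(summarize({case.case_id: metrics}))  # TC-001: accuracy=0.67
```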

1. System Input and Output (I/O)

Inputs and outputs are among the first things to consider when designing system tests. For many machine learning systems, such as those performing object detection, inputs may be image files or videos and outputs may be lists of detected objects. Text files such as comma-separated values (CSV) or JSON files may also be used as test inputs or outputs. Different tests may need different inputs depending on the functionality being tested, so test inputs should be flexible enough to allow for different kinds of test scenarios.
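To keep test inputs and outputs flexible, it can help to parse them into simple data structures before evaluation. The sketch below assumes a JSON test specification with `name` and `inputs` keys and a CSV output with one detected object per row; both schemas are hypothetical.

```python
import csv
import io
import json
from dataclasses import dataclass
from typing import List


@dataclass
class DetectedObject:
    label: str
    x: float
    y: float
    width: float
    height: float
    score: float


def load_test_spec(text: str) -> dict:
    """Parse a JSON test specification (hypothetical schema with 'name' and 'inputs')."""
    spec = json.loads(text)
    missing = {"name", "inputs"} - set(spec)
    if missing:
        raise ValueError(f"test spec is missing keys: {sorted(missing)}")
    return spec


def parse_detections_csv(text: str) -> List[DetectedObject]:
    """Parse system output written as CSV with a header row, one object per line."""
    reader = csv.DictReader(io.StringIO(text))
    return [
        DetectedObject(row["label"], float(row["x"]), float(row["y"]),
                       float(row["width"]), float(row["height"]), float(row["score"]))
        for row in reader
    ]


spec = load_test_spec('{"name": "night_video", "inputs": ["night_01.mp4"], "output_format": "csv"}')
output = "label,x,y,width,height,score\ncar,10,20,50,30,0.92\nperson,80,15,12,40,0.71\n"
objects = parse_detections_csv(output)
print(spec["name"], len(objects), objects[0].label)  # night_video 2 car
```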

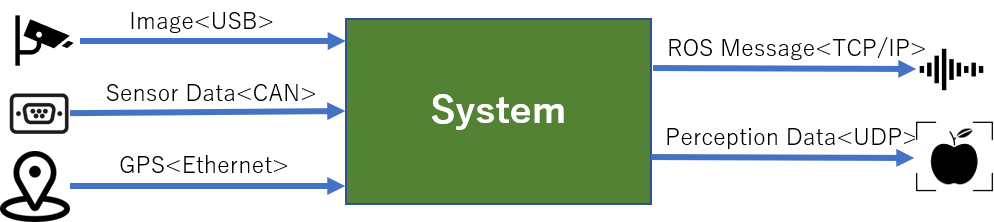

2. Communication Interface

How test inputs and outputs are sent to and received from the system also needs consideration. This is relatively easy to determine, since it is dictated by how the system communicates and interfaces with other software, and it in turn determines what kinds of tools you might need for testing. If the system uses the Robot Operating System (ROS) as its interface, you may want to use its many available packages and tools. Other interfaces, such as the User Datagram Protocol (UDP), may require creating your own tools to process inputs and outputs.

Fig. 4. System input and output examples and their data interface.
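For a UDP interface, the test tooling can be quite small. The sketch below assumes, for illustration only, that the system exchanges JSON-encoded messages in single datagrams; real systems often use a binary layout instead (which Python's `struct` module can handle). A second local socket stands in for the system so the example runs on its own.

```python
import json
import socket
from typing import Tuple

Address = Tuple[str, int]


def send_message(sock: socket.socket, address: Address, payload: dict) -> None:
    """Encode a message as JSON and send it as a single UDP datagram."""
    sock.sendto(json.dumps(payload).encode("utf-8"), address)


def receive_message(sock: socket.socket, timeout: float = 2.0) -> dict:
    """Wait for one UDP datagram and decode it as JSON (raises socket.timeout on silence)."""
    sock.settimeout(timeout)
    data, _sender = sock.recvfrom(65535)
    return json.loads(data.decode("utf-8"))


# Loopback demo: a second socket plays the role of the system under test.
system_sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
system_sock.bind(("127.0.0.1", 0))  # port 0 lets the OS pick a free port
tester_sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
tester_sock.bind(("127.0.0.1", 0))

send_message(tester_sock, system_sock.getsockname(), {"frame_id": 1, "image": "img_001.png"})
request = receive_message(system_sock)
send_message(system_sock, tester_sock.getsockname(), {"frame_id": request["frame_id"], "objects": ["car"]})
reply = receive_message(tester_sock)
print(reply)  # {'frame_id': 1, 'objects': ['car']}

system_sock.close()
tester_sock.close()
```

In a real test, only the tester side would remain, pointed at the address and port the system actually listens on.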

3. Hardware and Software Capabilities

Another thing to consider is the hardware capability of the machine on which the system runs. DNN models are especially demanding in terms of computing requirements, which becomes more apparent when they need to run on embedded systems. Running as many test scenarios as possible needs to be balanced against other variables, such as how long each test takes to run or how long it takes to load a model into the system. One benefit of system tests is finding bugs in the software, and this is difficult to do if the tests take days to run.
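Model loading is often one of the larger fixed costs in a test run. A minimal sketch, assuming a hypothetical `load_model` function that is slow to call (simulated here with `time.sleep`), caches the loaded model so that several scenarios share one load instead of reloading it each time.

```python
import functools
import time

LOAD_SECONDS = 0.2  # hypothetical stand-in for an expensive model load


@functools.lru_cache(maxsize=None)
def load_model(model_path: str) -> dict:
    """Hypothetical loader, cached so each model is loaded only once per test process."""
    time.sleep(LOAD_SECONDS)
    return {"path": model_path}


def run_scenario(model_path: str, scenario: str) -> str:
    model = load_model(model_path)  # first call pays the load cost, later calls hit the cache
    return f"{scenario} ran with {model['path']}"


start = time.perf_counter()
for scenario in ["highway_day", "urban_night", "crosswalk_rain"]:
    run_scenario("detector_v2.onnx", scenario)
elapsed = time.perf_counter() - start
print(f"3 scenarios in {elapsed:.2f} s")  # roughly one load time instead of three
print(load_model.cache_info().misses)  # 1
```

In Pytest, a fixture declared with `scope="session"` achieves a similar effect. Sharing one model instance across tests is only safe if the model keeps no state between inputs.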

Lessons from the Testing Room

Testing large machine learning application systems is no easy task. However, some steps can make designing and creating tests relatively easier. Based on my experience working with machine learning applications, the following tips might help test engineers.

Use a Test Framework

There is usually no need to reinvent the wheel when it comes to test frameworks. There is at least one for each major programming language, and many of them are mature and well-maintained enough for production use. The remaining question is personal preference and which programming language you are most comfortable with.

In one of my previous jobs, system testing was done using lists in text files and shell scripts. The test logic was very hard to follow, which made the test code hard to maintain. Today, various test frameworks can help you automate your tests. I have recently used Pytest because it is easy to use and allows new members of our team to quickly understand the test logic. It is also compatible with Python's unittest package, which makes it easier to create test scenarios.

Fig. 5. Using available system test frameworks and tools like Pytest and unittest can help automate system tests.
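Because Pytest can also collect and run tests written with the standard `unittest.TestCase` class, existing unittest-style tests do not have to be rewritten. The sketch below uses a hypothetical `FakeSystem` in place of a real system under test; `setUpClass` is the standard unittest hook for setup that should run once per test class.

```python
import unittest


class FakeSystem:
    """Hypothetical stand-in for the system under test (real tests would start the actual system)."""

    def __init__(self):
        self._outputs = {"empty_road.png": [], "one_car.png": ["car"]}

    def detect(self, image_name: str) -> list:
        return self._outputs[image_name]


class TestObjectDetection(unittest.TestCase):
    @classmethod
    def setUpClass(cls):
        # Expensive setup, such as starting the system and loading models, runs once per class.
        cls.system = FakeSystem()

    def test_empty_road_has_no_detections(self):
        self.assertEqual(self.system.detect("empty_road.png"), [])

    def test_single_car_is_detected(self):
        self.assertEqual(self.system.detect("one_car.png"), ["car"])
```

Saved as, for example, `test_detection.py`, the file can be run with either `python -m unittest test_detection` or `pytest test_detection.py`.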

There are other test frameworks out there, and it is worth trying a few of them to find the one that best fits your testing needs.

Conduct Regular Code and Design Reviews

Regular code reviews are beneficial for improving code quality. A different set of eyes often detects code smells that the author may have missed. For the reviewer, benefits may include learning new programming techniques and becoming familiar with a different part of the system. Reviews can help developers detect possible sources of bugs even before actual testing is done.

Design reviews, on the other hand, offer a chance for the team to improve overall system performance and maintainability. Software teams should dedicate a portion of their development time to improvements through refactoring and system design changes. Machine learning systems typically combine different types of models, sensor inputs and interface outputs, and regular design reviews may help optimize system performance.

Archive Test Results

Evaluating the performance of each function of the machine learning system should be part of system tests. This is especially crucial for large systems with multiple models, since these models may interact with each other in unforeseen ways. In addition, it is recommended to archive test results to track the performance of individual functions as models are upgraded and the system changes.

For storing system test results, a database is better suited to handling large amounts of data. A visualizer that compares test results also helps with analysis.
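As one possible setup, Python's built-in `sqlite3` module is enough for a small results archive. The table layout, function names and metric values below are hypothetical; a shared database server may be more suitable for a larger team, but the idea of recording each run's metrics per function and model version stays the same.

```python
import sqlite3
from datetime import datetime, timezone
from typing import Dict, List, Tuple


def open_results_db(path: str = ":memory:") -> sqlite3.Connection:
    """Open (or create) the results database; pass a file path to keep history between runs."""
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS results ("
        "run_at TEXT, model_version TEXT, function_name TEXT, metric_name TEXT, metric_value REAL)"
    )
    return conn


def archive(conn: sqlite3.Connection, model_version: str, function_name: str,
            metrics: Dict[str, float]) -> None:
    """Store one test run's metrics for a single system function."""
    run_at = datetime.now(timezone.utc).isoformat()
    with conn:  # commits all inserts of this run as one transaction
        conn.executemany(
            "INSERT INTO results VALUES (?, ?, ?, ?, ?)",
            [(run_at, model_version, function_name, name, value) for name, value in metrics.items()],
        )


def metric_history(conn: sqlite3.Connection, function_name: str, metric_name: str) -> List[Tuple[str, float]]:
    """Return (model_version, value) pairs in insertion order, ready to compare or plot."""
    return conn.execute(
        "SELECT model_version, metric_value FROM results "
        "WHERE function_name = ? AND metric_name = ? ORDER BY rowid",
        (function_name, metric_name),
    ).fetchall()


# Hypothetical metrics from two model versions.
conn = open_results_db()
archive(conn, "detector_v1", "vehicle_detection", {"precision": 0.91, "recall": 0.84})
archive(conn, "detector_v2", "vehicle_detection", {"precision": 0.93, "recall": 0.82})
print(metric_history(conn, "vehicle_detection", "recall"))  # [('detector_v1', 0.84), ('detector_v2', 0.82)]
```

The output of `metric_history` can be passed to a plotting tool to compare results across model versions.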

Conclusion

System testing is an important part of the machine learning application development life cycle. When done properly, it can help ensure a robust, secure and high-performance system. Its design and implementation are complicated, but following some of the steps described above may help in creating effective system tests.

Throughout this blog post, I’ve presented some thoughts on how to design and create system tests. A test creation workflow can help test engineers create sufficient test scenarios. There are also things to consider when designing tests, such as system I/O, the communication interface and hardware capabilities. Using external tools such as test frameworks and databases for storage is encouraged to automate testing as much as possible.

This post is by no means a complete and comprehensive guide. If you have experience testing machine learning application systems, please don’t hesitate to reach out and share some of the lessons that you’ve learned.


Author Profile

- Software engineer with a PhD in Bioinformatics. Part-time househusband who enjoys cooking and travel.